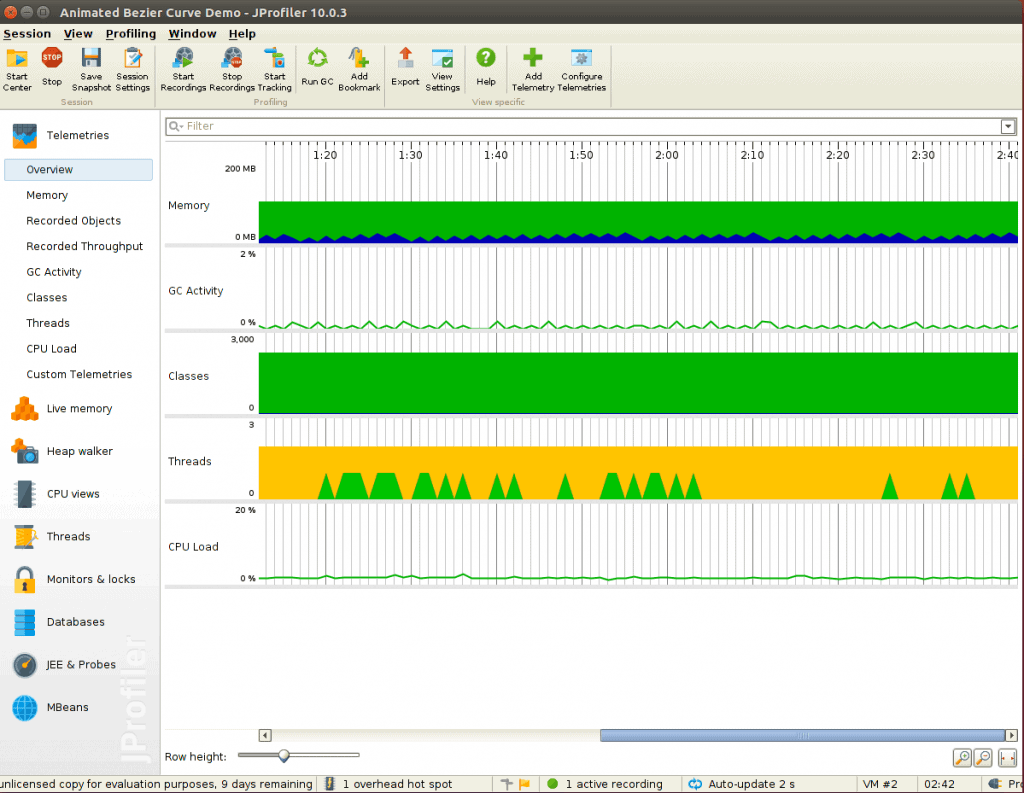

Regardless of the outcome from previous actions, the next step should always be a dynamic profiling session of the running application. Spikes or anomalies within this output can point your investigation in the right direction. Once an application with NMT enabled has started, the following command will return a comprehensive overview of the reserved and committed memory.

#JPROFILER MEMORY LEAK TUTORIAL CODE#

The second feature enabled by the listed command is called Native Memory Tracking (NMT) and is helpful to determine which memory region (heap, stack, code cache or reserved space for the GC) uses memory excessively. Note that GC logging can even be enabled for production use, since it’s impact on application performance is nearly nothing. The GC log can be analyzed by hand or visualized using a service like GCeasy, which generate extensive reports based on the logs. This log is automatically rotated at a file size of 2 MB and maintains a maximum of five log files before rotating once again. Once the JVM parameters are implemented, all Garbage Collector (GC) activities are added to a file called gclog.log within the application directory. Both options can be configured via JVM parameters as shown below. The next step in the analysis process is to enable verbose GC-logging and Native Memory Tracking (NMT) to gain additional data about the application in question. In circumstances where limits are defined within the Kubernetes environment, this metric is used to determine which Pod can be evicted in favor of another that wants to allocate more memory. RSS should be considered for monitoring along with other relevant metrics such as the working set, which includes a Linux page cache and is used by Kubernetes (and many other tools) to report memory usage. Resident Set Size (RSS) is a measurement of “true” memory consumption of a process by not including swapped pages, therefore indicating physical RAM usage. Virtual memory, however, is not necessarily a good metric to monitor, as it can be difficult to determine whether or not it’s backed by physical memory. These questions are necessary to determine the root cause of memory leaks, as the Kubernetes Pod can host multiple processes in addition to Java. Questions to support your analysis include:Ĭan the problem be verified by using another tool, script or metric? 
The very first step to analyzing an application suspected of having a memory leak is to verify your metrics.

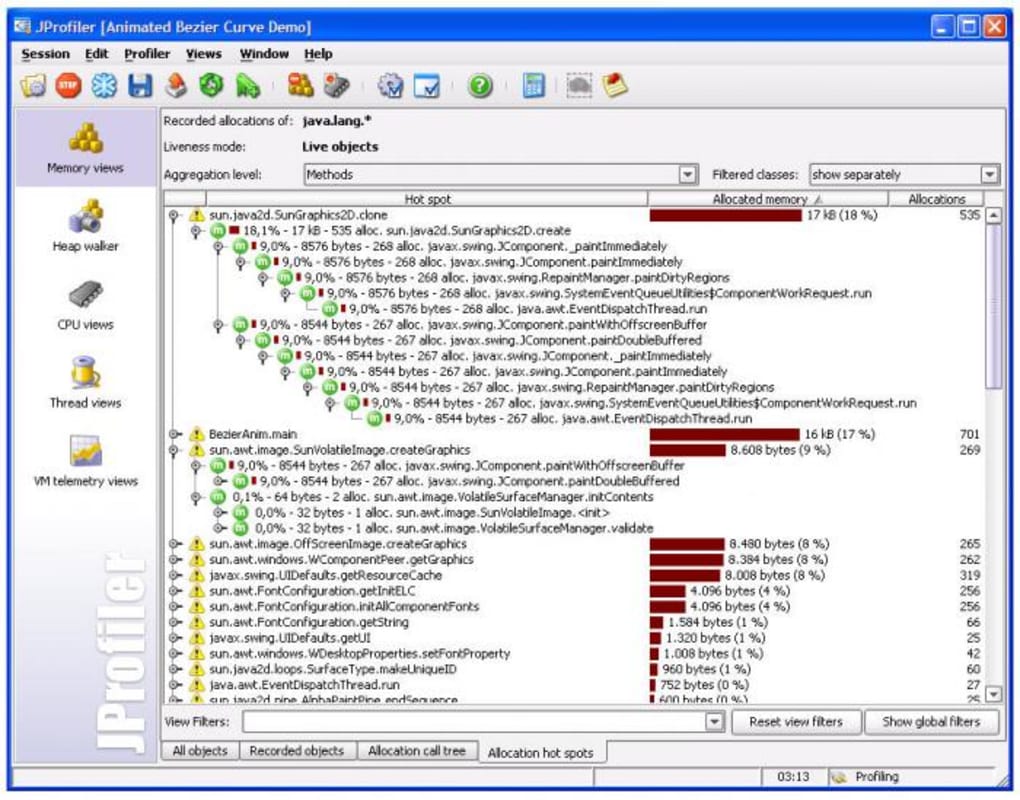

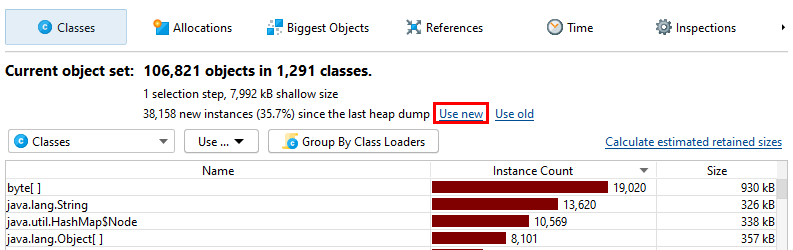

Alternatively, there are a handful of dedicated graphical profilers as well as GC-log analyzers available.

Over the years, many approaches and tools have been developed to support memory profiling Java applications use command-line programs such as: jcmd or jmap, which come with the Java Development Kit (JDK). To identify the root cause of a memory issue, developers can use a technique called memory profiling. So, keep in mind that it is not necessarily your code that is leaking. Additional leaks could be a result of issues within third-party integrations, where other leaks or unsafe calls through the Java Native Interface (JNI) are made and are hard to debug after the fact. Although, there is still a possibility that this first inspection fails, and a hazardous piece of code makes it into the application which may result in high memory usage. These programs can find potential issues such as unclosed streams, infinite loops etc. Popular solutions for this are SonarQube and SpotBugs. For the sake of early recognition, this analysis should happen at the moment a developer pushes a change to the code repository. To help with this, static code analysis must be executed and provide feedback on which pieces can be improved to prevent bugs or performance problems in the future. When it comes to preventing memory issues, questionable code should not even make it into production. So let’s dive right in! Investigating Java Memory Issues

#JPROFILER MEMORY LEAK TUTORIAL HOW TO#

In this second post we cover more in-depth facets of cloud storage as it pertains to Bitmovin’s encoding service and how to mitigate unnecessary memory loss. 1, we reviewed the basics of Java’s Internals, Java Virtual Machine (JVM), the memory regions found within, garbage collection, and how memory leaks might happen in cloud instances.

#JPROFILER MEMORY LEAK TUTORIAL SERIES#

Welcome back! In our first instalment of the Memory Series – pt.
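
To make the NMT step more concrete, here is a minimal sketch of how the summary could be requested and condensed. The command listing from the post is not reproduced here, so this assumes the target JVM was started with `-XX:NativeMemoryTracking=summary` and that the summary is requested via `jcmd <pid> VM.native_memory summary`. The class name `NmtSummary` and the method `committedByCategory` are hypothetical helpers. The parser assumes the default KB scale of the report, and `readAllBytes` requires Java 9 or newer.

```java
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: condenses an NMT summary into "committed KB per category".
final class NmtSummary {

    // Assumed line shape: "-   Java Heap (reserved=131072KB, committed=65536KB)"
    private static final Pattern CATEGORY = Pattern.compile(
            "^-\\s*(.+?) \\(reserved=(\\d+)KB, committed=(\\d+)KB\\)");

    /** Returns the committed memory in KB for each NMT category, in report order. */
    static Map<String, Long> committedByCategory(String nmtOutput) {
        Map<String, Long> result = new LinkedHashMap<>();
        for (String line : nmtOutput.split("\\R")) {
            Matcher m = CATEGORY.matcher(line.trim());
            if (m.find()) {
                result.put(m.group(1), Long.parseLong(m.group(3)));
            }
        }
        return result;
    }

    public static void main(String[] args) throws Exception {
        if (args.length != 1) {
            System.err.println("usage: java NmtSummary.java <pid>");
            return;
        }
        // Ask the running JVM (with NMT enabled) for its native memory summary.
        Process p = new ProcessBuilder("jcmd", args[0], "VM.native_memory", "summary")
                .redirectErrorStream(true)
                .start();
        String out = new String(p.getInputStream().readAllBytes(), StandardCharsets.UTF_8);
        p.waitFor();
        committedByCategory(out)
                .forEach((name, kb) -> System.out.printf("%-28s %10d KB%n", name, kb));
    }
}
```

Running this periodically and comparing the per-category values is one way to spot the spikes or anomalies mentioned above. A category whose committed size keeps growing between runs is a reasonable place to look first.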
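
For the metric verification step, a second data source can help confirm that what a dashboard reports matches what the process actually uses. The sketch below is one possible cross-check, not the method used in the post: it prints the heap usage the JVM reports about itself next to the process RSS read from `/proc/self/status`. That file exists on Linux only, and its `VmRSS` field is assumed to be the value of interest. The names `RssCheck` and `parseVmRssKb` are hypothetical.

```java
import java.io.IOException;
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;
import java.util.OptionalLong;

// Hypothetical helper: compares JVM-reported heap usage with the process RSS.
final class RssCheck {

    /** Extracts the VmRSS value (in kB) from the lines of /proc/<pid>/status. */
    static OptionalLong parseVmRssKb(List<String> statusLines) {
        for (String line : statusLines) {
            if (line.startsWith("VmRSS:")) {
                // Expected shape: "VmRSS:     123456 kB"
                String[] parts = line.substring("VmRSS:".length()).trim().split("\\s+");
                return OptionalLong.of(Long.parseLong(parts[0]));
            }
        }
        return OptionalLong.empty();
    }

    public static void main(String[] args) throws IOException {
        MemoryUsage heap = ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
        System.out.printf("heap used:      %d kB%n", heap.getUsed() / 1024);
        System.out.printf("heap committed: %d kB%n", heap.getCommitted() / 1024);

        Path status = Paths.get("/proc/self/status"); // Linux only
        if (Files.isReadable(status)) {
            OptionalLong rss = parseVmRssKb(Files.readAllLines(status));
            if (rss.isPresent()) {
                System.out.printf("process RSS:    %d kB%n", rss.getAsLong());
            }
        } else {
            System.out.println("process RSS:    unavailable (no /proc/self/status)");
        }
    }
}
```

A large and growing gap between RSS and committed heap suggests that the memory is being consumed outside the Java heap, which is where Native Memory Tracking becomes useful.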